In a controlled environment, it is not perfection that captures our attention, but disruption. A stage light falling from the sky in The Truman Show, or an artificial intelligence citing articles that do not exist: these moments break the illusion and render the unexpected salient.

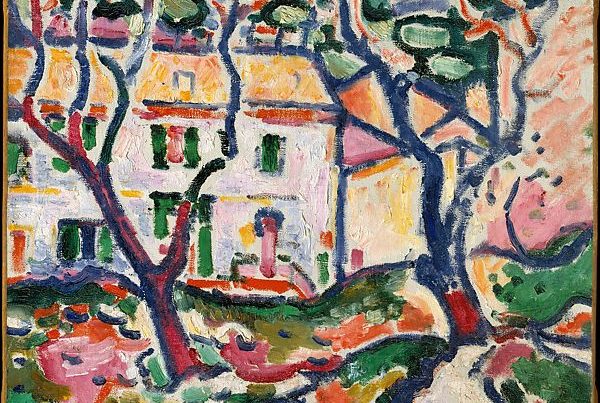

Photo by Phil Botha

Artificial Intelligence has left the pages of Asimov’s science fiction to become our personal assistant. With a PhD in everything on Wikipedia and Reddit (yes, we are all in desperate need to “touch grass”), AI creates our training schedules, cooking recipes, dissertations, and even gives free relationship advice.

With 700 million weekly active users (OpenAI, 2025), ChatGPT can be described as a “yes-man.” No matter how daring the prompt, the AI will come up with an answer. And more and more, we cling to it as if it were our last resort.

Hallucinations, citations of papers that don’t exist, overgeneralized answers: AI is not as perfect as we might have imagined, had we noticed those red flags sooner. But why do we trust AI so readily, even when something feels a bit off? This tendency reflects a phenomenon called “machine heuristic” (Dietvorst et al., 2014), a cognitive shortcut that assumes AI can provide more accurate answers than humans.

However, even though AI attempts to eliminate error, ambiguity, and inefficiency, it is inherently human. Imagined by humans, coded by humans, implemented by humans, prompted by humans… AI is a profoundly human creation. Every answer it gives is biased, based on the data it was trained on. The output is, therefore, subject to perspective and imperfection.

According to the Processing Fluency Theory (Reber et al., 2004), people prefer stimuli that are easy to process. Familiarity plays a central role here, as it’s easier to process things we are exposed to more often. A friend’s face, a predictable sentence structure, an overly generic AI response, and even a copy of the Mona Lisa carry this effect. Even though what is easy to process may feel reliable or comforting, it rarely captures our attention. Instead, it is the unexpected, the unfamiliar, the out of place, that stands out. A blue velvet dress in the middle of a black-and-white ball, now that’s memorable and… salient.

Salience is the art of standing out. Imperfection triggers salience, as it signals that something is out of place. We notice it, we remember it. Who could forget the brightest star in the sky, Sirius, falling in Seahaven, the fictitious city where Truman lives in The Truman Show?

Played by Jim Carrey, Truman leads a perfectly normal life: an insurance salesman, a husband, a friend, and… unknowingly, the protagonist of one of the world’s most famous reality shows.

But then, his perception of reality begins to shift. He starts noticing inconsistencies, deviations from the narrative he had always known. There are glitches in the radio, a stage light falling (yes, the one the crew pretended was a star), artificial rain falling only on him… these small disruptions spark his curiosity.

Truman confronts his creator:

“Was nothing real?”

“You were real. There is no more truth out there than there is in the world I created for you.”

In a perfectly controlled, constructed environment like that of The Truman Show, the external world keeps everything structured. Nothing stands out, because everything is exactly where it is supposed to be, at the right time and place. Each comma and grammatical rule in AI-generated phrasing, each actor and scripted moment in Truman’s world, is part of a system striving toward perfection.

But humans are inherently flawed, both the coders of AI systems and the crew behind Truman’s world. And that is where the beauty lies. In accepting our imperfections and truths, we make ourselves salient. Not through the outside world, but through how we come to see ourselves within it.

Salience emerges through imperfection. Disfluent stimuli capture our attention and stay in our memory, while flawed speech and grammar errors remind us of our humanity. In both AI and Truman’s world, these disruptions break the illusion of perfection to reveal the people behind it.

So, without further ado:

ChatGPT, from all these thoughts above, write an article for the Spiegeloog magazine about salience.

References

– Dietvorst, B. J., Simmons, J. P., & Massey, C. (2014). Algorithm aversion: People erroneously avoid algorithms after seeing them err. Journal of Experimental Psychology: General, 144(1), 114–126. https://doi.org/10.1037/xge0000033

– OpenAI. (2025, September 15). How people are using ChatGPT. https://openai.com/index/how-people-are-using-chatgpt/

– Reber, R., Schwarz, N., & Winkielman, P. (2004). Processing fluency and aesthetic pleasure: Is beauty in the perceiver’s processing experience? Personality and Social Psychology Review, 8(4), 364–382. https://doi.org/10.1207/s15327957pspr0804_3
